Key Takeaways

- AI compliance in finance covers legal, ethical, and governance duties across the full AI lifecycle, from data use to ongoing monitoring.

- The EU AI Act, GDPR, and Irish financial regulation set clear expectations around transparency, fairness, accountability, and risk control.

- Non-compliance exposes firms to fines, operational disruption, reputational damage, and loss of trust.

- Adopting recognised standards and strong security controls helps firms use AI safely while meeting regulatory obligations.

AI offers financial planners and advisers an opportunity to streamline operations and analyse data at unprecedented speed. For many in the industry, however, adopting these tools creates a real dilemma. As a financial adviser, you have probably wondered how to embrace the innovation and efficiency AI brings without crossing the line into regulatory non-compliance.

With the introduction of the EU AI Act, alongside existing GDPR and Irish financial regulations, the stakes are rising. It is becoming clear that professionals should tread carefully when using AI and ensure they comply with best practices. If you fail to understand the ethical and legal nuances of these new rules, your practice could be exposed to operational risks that threaten not only your reputation but your licence to operate.

However, AI compliance shouldn't be viewed as a barrier or just "another legal requirement". It is a framework that helps you deliver high-quality service while using AI systems ethically. It protects clients from unfair or unsafe outcomes and helps organisations reduce regulatory and operational risks.

Our blog post breaks down exactly what finance professionals need to know - from the EU AI Act to practical implementation strategies. Read on to discover how to use AI tools confidently, ensuring you remain compliant while delivering efficient, top-tier financial services to your clients.

What Is AI Compliance in Finance?

AI compliance means creating, using, and managing artificial intelligence systems in line with legal, ethical, and regulatory expectations. It covers data collection, model development, performance testing, deployment, and ongoing monitoring.

A compliant AI system:

- uses lawful and reliable data

- explains how it works

- avoids unfair outcomes

- documents important decisions

- keeps people responsible for oversight

In practice, AI compliance sits where technology, governance, and ethics meet. Every team plays a part, from data science to senior leadership.

Why Does AI Compliance Matter Today?

AI now supports decisions across finance, education, healthcare, employment and public services. This creates value for organisations, but it also introduces serious risks.

Non-compliance can lead to:

- major fines

- public complaints

- reputational damage

- unsafe or biased outcomes

- cybersecurity vulnerabilities

- a loss of customer trust

Regulators in Europe, the UK and Ireland expect stronger controls on AI. Customers expect fairness and transparency. Organisations that prepare early protect themselves from legal risk and build long-term credibility.

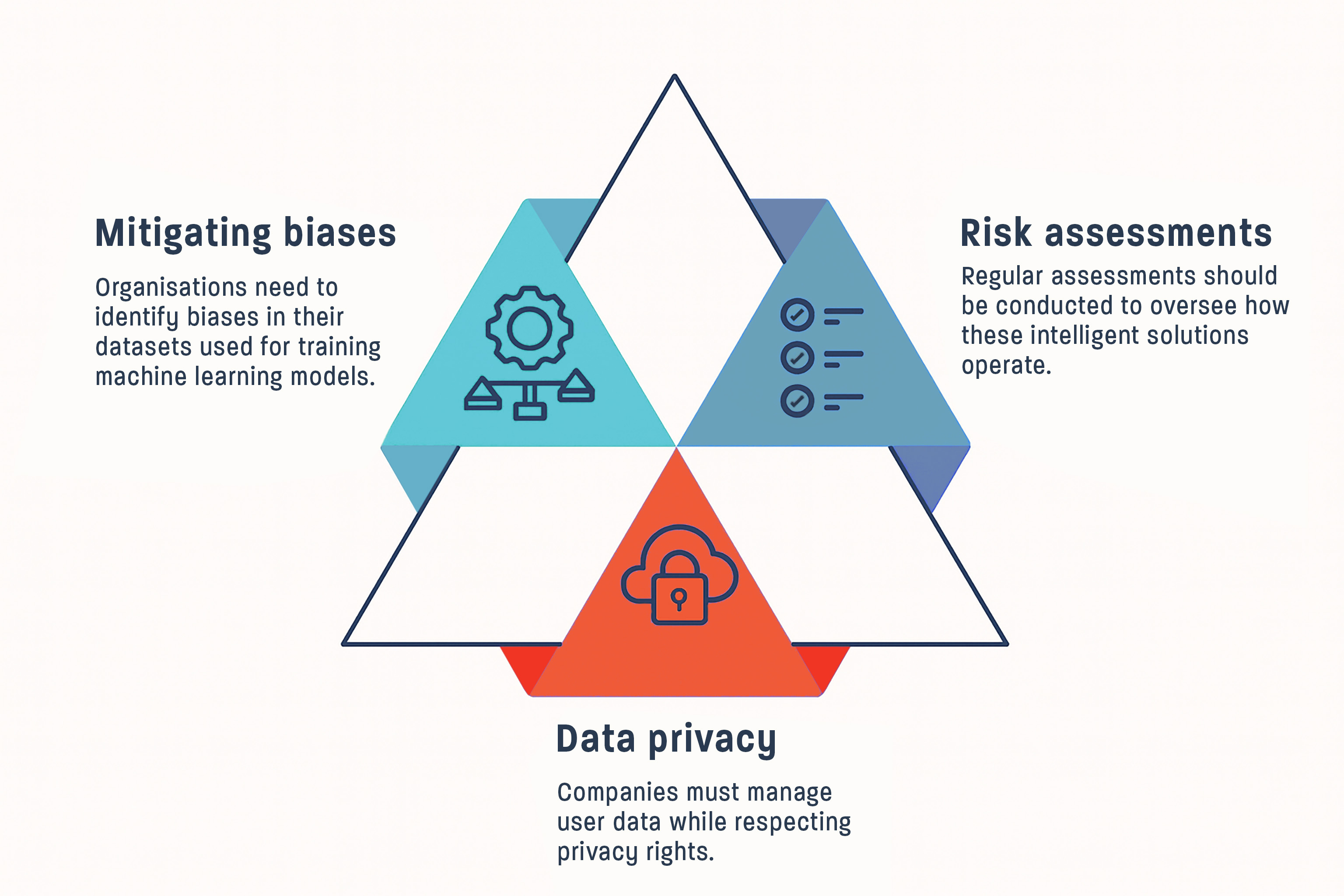

Core Principles and Components of AI Compliance

Transparency means users should understand how an AI system works. Organisations explain the purpose, data sources, logic, and limitations of their models in clear language.

Fairness means the system avoids biased decisions. Teams test for uneven outcomes and retrain models when needed. They use representative data to check how predictions behave across groups.

Accountability means people remain responsible for decisions supported by AI. Each system has a named owner, a documented approval process, and a clear escalation path when issues arise.

These principles guide every step of the AI lifecycle.
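As an illustration of the fairness testing described above, the following is a minimal sketch of comparing outcomes across groups. The function names, the sample decisions, and the 0.8 review threshold are all assumptions made for this example; the threshold is an internal trigger for review, not a regulatory figure.

```python
from collections import defaultdict


def approval_rates(records):
    """Return the share of positive outcomes for each group.

    `records` is an iterable of (group, approved) pairs, where
    `approved` is True or False.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 = identical)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favoured
    return min(rates.values()) / highest


# Hypothetical decisions from a model under review.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", True),
]

REVIEW_THRESHOLD = 0.8  # assumed internal threshold, not a legal limit

rates = approval_rates(sample)
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
```

A low ratio does not prove the model is unfair, but it gives the system owner a concrete signal to investigate and document.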

What is the Legal and Regulatory Landscape?

The regulatory environment in Europe drives global standards. Key frameworks include:

- the EU AI Act

- the UK’s sector-led AI governance approach

- guidance from the Irish Data Protection Commission

- GDPR obligations around data protection and automated decision-making

These rules expect clear documentation, risk management, transparency, and strong user protections. Organisations in Ireland and the UK that work with European partners must meet these expectations too.

The United States provides a useful comparison, but businesses here follow European requirements first.

What is the Role of Cybersecurity in AI Compliance?

Cybersecurity is central to AI compliance. Weak security creates legal risk and undermines trust.

Strong AI security includes:

- restricting access to training data and model files

- versioning data to protect integrity

- monitoring for unusual activity

- logging inputs, outputs, and key actions

- defending against adversarial attacks or model poisoning

Security protects both the system and the people who rely on it. It also supports GDPR and EU AI Act obligations around data protection, incident response, and accountability.
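Two of the controls above, versioning data and logging inputs and outputs, can be sketched in a few lines. This is a simplified example under stated assumptions: the field names, the `audit_record` helper, and the sample values are hypothetical, and a production system would also need to handle personal data in logs in line with GDPR.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(data: bytes) -> str:
    """Return a SHA-256 digest so any change to the data is detectable."""
    return hashlib.sha256(data).hexdigest()


def audit_record(model_version, dataset_bytes, model_input, model_output, actor):
    """Build one log entry linking a decision to its model and data version."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "model_version": model_version,
        "dataset_sha256": fingerprint(dataset_bytes),
        "input": model_input,
        "output": model_output,
    }


# Hypothetical values for illustration only.
record = audit_record(
    model_version="v1.2",
    dataset_bytes=b"client_id,income\n1,52000\n",
    model_input={"client_id": 1},
    model_output={"risk_band": "medium"},
    actor="adviser-017",
)

# One JSON object per line is easy to append to a log file and to search later.
log_line = json.dumps(record, sort_keys=True)
```

Storing the dataset digest alongside each decision means an auditor can later confirm which version of the data a given output relied on.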

Organisations can use recognised security standards (such as those from ISO or NIST) to demonstrate to clients and regulators that they manage AI risks consistently across different models, data, and technical systems.

The Regulatory Landscape for AI Compliance in the EU

What are the Consequences for Non-Compliance Under the European AI Act?

The EU AI Act uses a risk-based system and introduces significant penalties.

It places strict rules on “high‑risk” AI systems, especially those used in finance, employment, credit decisions, and access to essential services.

Fines can reach:

- up to €35 million or 7 percent of global turnover for banned uses

- up to €15 million or 3 percent of global turnover for other serious violations

- up to €7.5 million or 1 percent of global turnover for providing incorrect information
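To make these tiers concrete, here is a minimal sketch of the arithmetic. It assumes the ceiling is whichever of the two amounts is higher, and it ignores any different treatment for smaller firms. The tier names and the `max_fine` function are illustrative labels, not terms from the Act.

```python
from decimal import Decimal

# Tiers as listed above: (fixed cap in EUR, share of global turnover).
PENALTY_TIERS = {
    "banned_use": (Decimal("35000000"), Decimal("0.07")),
    "other_serious_violation": (Decimal("15000000"), Decimal("0.03")),
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine(tier, global_turnover_eur):
    """Return the upper limit of the fine for a tier.

    Assumption: the higher of the fixed cap and the turnover share applies.
    """
    fixed_cap, share = PENALTY_TIERS[tier]
    return max(fixed_cap, share * Decimal(global_turnover_eur))


# A firm with EUR 1 billion turnover: 7 percent (EUR 70 million) exceeds the cap.
large_firm_limit = max_fine("banned_use", 1_000_000_000)

# A firm with EUR 100 million turnover: 3 percent is EUR 3 million,
# so the EUR 15 million fixed cap is the higher figure.
mid_firm_limit = max_fine("other_serious_violation", 100_000_000)
```

These figures are maximums. The actual fine in any case would depend on the regulator's assessment.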

Beyond financial penalties, regulators can also order systems to be withdrawn from the market or suspended until they comply with the necessary requirements. This can disrupt business operations and cause serious reputational damage.

High-risk systems must meet strict requirements. These include clear technical documentation, thorough testing and verification, effective communication with users, ongoing performance monitoring, and incident reporting when problems arise. Organisations in Ireland and the UK that serve European markets should follow these rules if their AI tools fall within the Act’s scope, even if the systems are developed or hosted outside the EU.

How Does the Regulatory Landscape for AI Compliance Differ in the United States?

The United States does not use a single AI law, but federal actions focus on safe and trustworthy AI. These include national guidance for risk control and stronger oversight of AI in public services. In addition, there is an increased use of existing laws on discrimination, consumer protection, and financial regulation to address harmful AI practices.

Even without specific AI laws, federal agencies (like the FTC and CFPB) can use existing laws to penalise companies if their AI systems are misleading, unfair, or dangerous.

For European organisations, this framework is not purely contextual. If a European firm operates in the US market or targets US consumers, it may face direct US legal exposure under both federal and state laws (Congress.gov, Norton Rose Fulbright).

EU and UK expectations differ: EU rules are generally more detailed and prescriptive, while the UK follows a more principles-led and sector-based approach (GOV.UK).

US federal policy remains in flux. The White House has signalled an intention to develop a national framework through an executive order that seeks to align or challenge state-level AI laws. This supports the need for organisations to track developments, but it also shows that the US landscape is less stable than it might appear.

For European organisations, the central focus remains on EU and UK expectations, but awareness of US exposure is essential if they operate across markets.

Implementing AI Compliance across Financial Organisations

What are the Key Compliance Certifications and Training You Should Consider?

Training builds a strong compliance culture. Staff across product, engineering, leadership, and customer services need a shared understanding of responsibilities.

Useful standards include:

- ISO/IEC 42001 for AI management systems

- ISO/IEC 23894 for AI risk management guidance

- ISO/IEC 5338 for AI system life cycle processes (engineering lifecycle control that supports governance)

- ISO 31700 for privacy-by-design

- ISO/IEC 27001 for information security

- the NIST AI Risk Management Framework for practical risk controls

These frameworks help organisations design, test, and monitor AI responsibly. They also provide evidence to regulators, clients, and partners that AI is being managed in a structured, consistent, and internationally recognised way.

Of the standards listed, ISO/IEC 42001 is arguably the most important because it acts as the "umbrella" framework for your entire AI strategy. As the world's first internationally certifiable standard for AI management systems, it allows financial firms to show regulators and auditors that they have a structured, safe, and accountable system for governing AI, rather than just isolated technical controls.

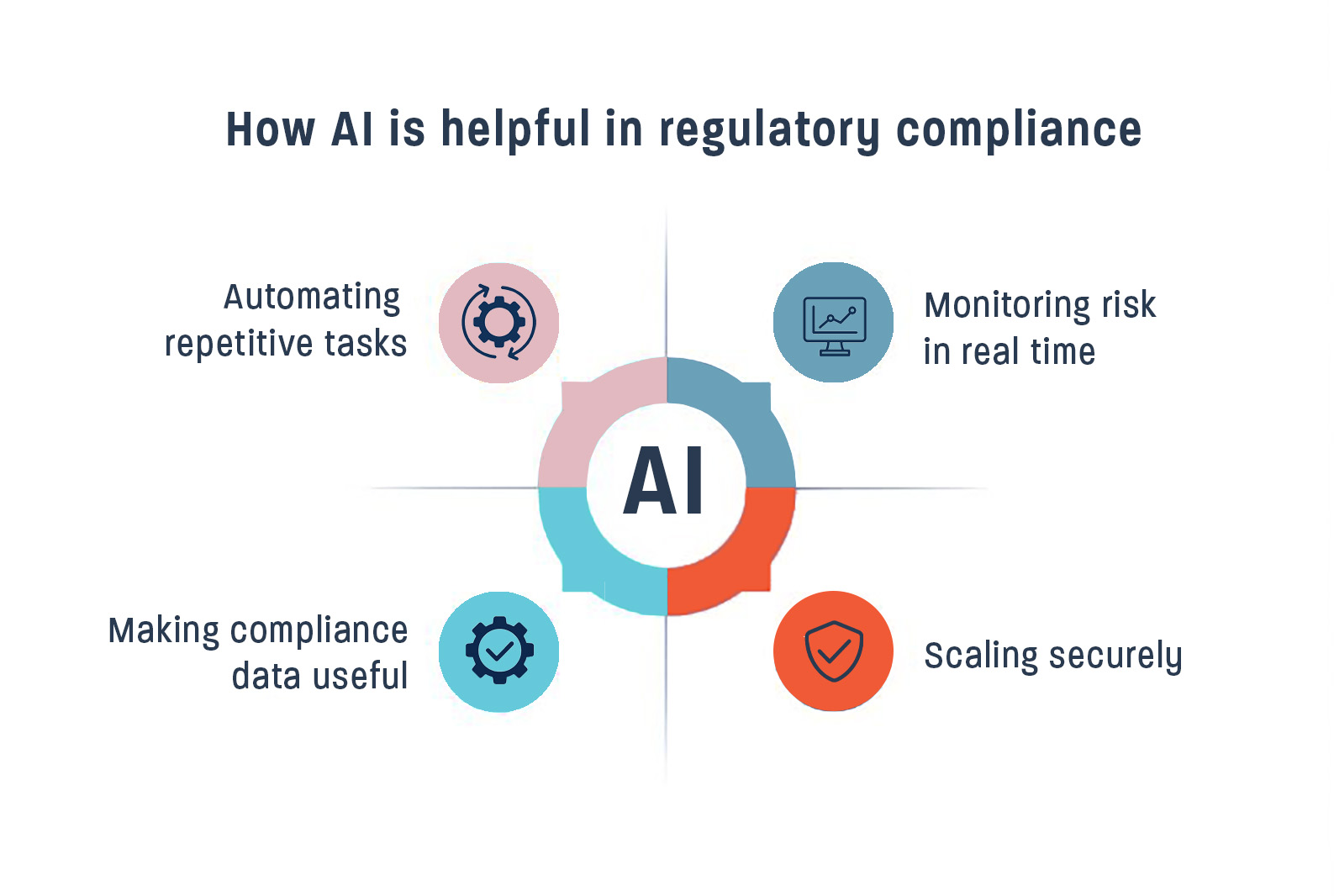

List of Tools and Technologies for AI Compliance

Effective tools support consistency and reduce risk.

Helpful solutions include:

- AI system inventories

- policy enforcement tools

- fairness, bias and robustness testing tools

- model monitoring dashboards

- audit logs for traceability

- third-party risk and vendor assessment tools

These tools support organisations as regulations evolve.
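An AI system inventory, the first item in the list above, does not need specialist software to get started. The sketch below shows one possible shape for an inventory entry and a check for overdue reviews. The field names, risk labels, sample systems, and the 365-day review interval are assumptions for illustration, not requirements from any regulation or standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AISystemEntry:
    """One row in a hypothetical AI system inventory."""
    name: str
    purpose: str
    owner: str                      # named person accountable for the system
    risk_level: str                 # assumed labels, e.g. "high" or "minimal"
    data_sources: List[str] = field(default_factory=list)
    last_reviewed: Optional[date] = None


def overdue_reviews(inventory, today, max_age_days=365):
    """Return names of systems never reviewed or reviewed too long ago."""
    overdue = []
    for entry in inventory:
        never_reviewed = entry.last_reviewed is None
        if never_reviewed or (today - entry.last_reviewed).days > max_age_days:
            overdue.append(entry.name)
    return overdue


# Hypothetical inventory for a small advisory firm.
inventory = [
    AISystemEntry("Client risk profiler", "Suggest risk bands", "J. Murphy",
                  "high", ["fact-find forms"], date(2025, 1, 15)),
    AISystemEntry("Document summariser", "Summarise policy documents",
                  "A. Byrne", "minimal", ["provider PDFs"], date(2023, 11, 1)),
    AISystemEntry("Chat assistant", "Answer general queries", "A. Byrne",
                  "limited"),
]

to_review = overdue_reviews(inventory, today=date(2025, 6, 1))
```

Even a simple list like this gives each system a named owner and makes gaps in review visible.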

What to Expect in the Future? The Evolving Landscape of AI Compliance

AI regulation will expand across Europe, the UK and Ireland. Customers will expect more transparency, clearer explanations, and stronger safeguards. Organisations will need to maintain detailed documentation, monitor models closely, and update systems regularly.

Certification under ISO/IEC 42001 will become more common. Companies that act early will adapt more smoothly to new obligations and build deeper trust with customers.

Conclusion

AI is now embedded in financial services, and regulators expect firms to manage it with the same discipline as any other high-risk activity. Compliance with the EU AI Act, GDPR, and Irish regulatory requirements protects clients while reducing legal and operational risk. Organisations that invest early in governance, training, and recognised standards will be best placed to use AI confidently and sustainably.

Organisations document their systems, test fairness, ensure transparency, maintain human oversight, protect data, and monitor performance throughout the lifecycle.

Governance, risk management, data and model quality, transparency with oversight, and continuous monitoring.

An AI compliance lawyer helps organisations understand regulations, complete risk assessments, prepare documentation and meet legal obligations.

Standards include ISO/IEC 42001, ISO 31700, ISO/IEC 5338, ISO/IEC 27001, and the NIST AI RMF.

Context, compliance, consent, correctness, calibration, continuity, and control.

It sets strict rules for high-risk systems and introduces significant fines. Businesses must test, document, and monitor their AI systems.

Under the EU AI Act, high-risk AI covers credit scoring, employment, education tools, and systems for essential services. AI for investment advice, risk profiling, and suitability is not listed as high risk, but it is supervised under MiFID II and the Consumer Protection Code, and by the Central Bank of Ireland.

Organisations document system purpose, data sources, testing results, monitoring logs, incidents and updates.

They can create an AI inventory, adopt recognised standards, train staff, test systems regularly, and maintain thorough documentation.

AI governance provides the internal structure and policies. AI compliance ensures the organisation meets legal and regulatory requirements.

References:

- European Commission, EU AI Act

- EU AI Act penalties

- Irish Data Protection Commission guidance

- UK AI governance approach

- Executive Order 14110 (context only)

- ISO/IEC 42001

- ISO/IEC 5338

- ISO 31700

- ISO/IEC 27001

- NIST AI RMF

- Google Search Central advice on helpful content

Author - Scott Wilkinson, LIA Lecturer for AI & Head of Culture and Coaching at Brightbea